Clixbee v1: Picd

Once the camera roll problem felt real, I started building. The app would eventually be called Clixbee, but it began as Picd. The name focused on the outcome I wanted: the app would pick the single best photo from your most recent shots.

That meant I needed a way to evaluate each photo, assign it a score, and sort by that score. A higher score meant a better photo, at least in theory, or perhaps only from a technical perspective.

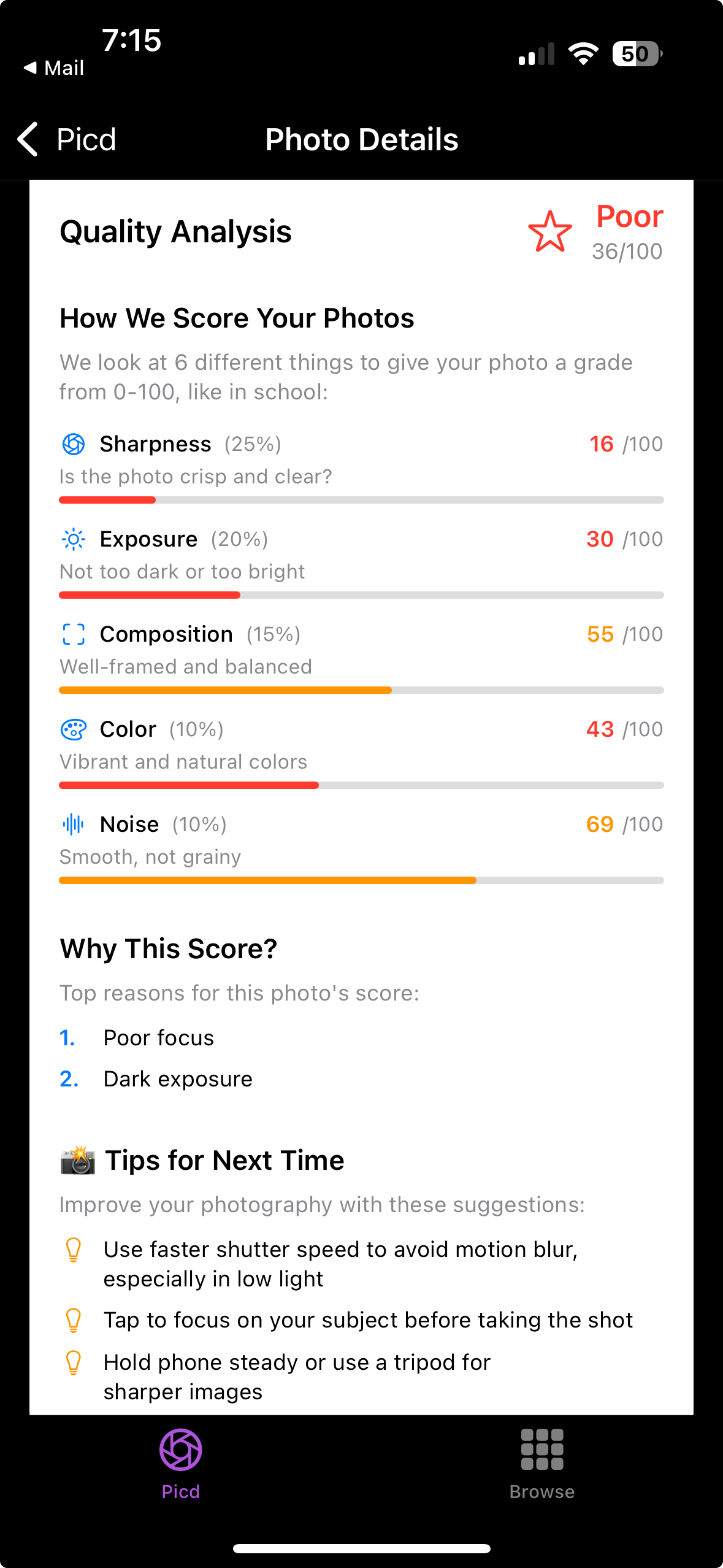

I leaned into what Apple already provided. Using Vision and related image APIs, I could evaluate lighting, sharpness, faces, and composition without training a custom model. The first scoring pass weighted six signals:

- Sharpness (25%)

- Exposure (20%)

- Face quality (20%)

- Composition (15%)

- Color (10%)

- Noise (10%)

Each analyzer returned a normalized value. The scoring engine applied the weights and produced a composite grade from 0 to 100. The app then sorted the recent batch and returned the top five. Between modern tools like Goose and ChatGPT and my experience building iOS apps, the proof of concept was easy to get off the ground.
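A minimal Swift sketch of that weighted composite, assuming each analyzer's output is already normalized to the range 0...1. The `Signal` enum, `compositeGrade`, and `topPhotos` names are hypothetical and not Clixbee's actual code; only the six weights and the top-five cutoff come from the description above.

```swift
// Hypothetical signal names mirroring the six analyzers listed above.
enum Signal: CaseIterable {
    case sharpness, exposure, faceQuality, composition, color, noise

    // Weights from the first scoring pass; together they sum to 1.0.
    var weight: Double {
        switch self {
        case .sharpness: return 0.25
        case .exposure: return 0.20
        case .faceQuality: return 0.20
        case .composition: return 0.15
        case .color: return 0.10
        case .noise: return 0.10
        }
    }
}

struct ScoredPhoto {
    let id: String
    let grade: Double  // composite grade on a 0...100 scale
}

/// Combines normalized analyzer outputs into a 0...100 grade.
/// In this sketch a missing signal counts as 0, and values are clamped to 0...1.
func compositeGrade(_ scores: [Signal: Double]) -> Double {
    var total = 0.0
    for signal in Signal.allCases {
        let value = min(max(scores[signal] ?? 0, 0), 1)
        total += signal.weight * value
    }
    return total * 100
}

/// Grades a batch, sorts it highest first, and keeps the top `limit` photos.
func topPhotos(_ batch: [(id: String, scores: [Signal: Double])], limit: Int = 5) -> [ScoredPhoto] {
    let graded = batch.map { ScoredPhoto(id: $0.id, grade: compositeGrade($0.scores)) }
    return Array(graded.sorted { $0.grade > $1.grade }.prefix(limit))
}
```

A photo that scores perfectly on every signal lands at 100, while one that is only perfectly sharp lands at 25, which shows how much weight the remaining five signals carry.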

The highest-scoring images were usually strong. The scoring engine could catch subtle blur or poor lighting that I might miss while scrolling quickly. I did notice, however, that most of my photos were rated lower than I expected. The system was too strict, too technical. It treated soft focus and low light as hard penalties. The numbers were consistent, but they did not always pick out "the best photo".
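To make "hard penalty" concrete, here is a minimal sketch contrasting a step cutoff with a smooth logistic curve for a single normalized signal. Both functions, the threshold, and the `steepness` parameter are hypothetical; the post does not describe how Clixbee maps raw measurements to scores, and a logistic curve is just one common way to soften a cutoff.

```swift
import Foundation

/// Hard penalty (hypothetical): anything below the threshold scores 0, anything at or above scores 1.
func hardPenalty(_ raw: Double, threshold: Double) -> Double {
    raw < threshold ? 0 : 1
}

/// Soft penalty (hypothetical): a logistic curve centered on the threshold,
/// so a slightly soft or dim photo loses some points instead of all of them.
/// Larger `steepness` values make the curve behave more like the hard cutoff.
func softPenalty(_ raw: Double, threshold: Double, steepness: Double = 10) -> Double {
    1 / (1 + exp(-steepness * (raw - threshold)))
}
```

With a threshold of 0.5, a photo measuring 0.49 drops to 0 under the step function but keeps a little under half its score under the curve.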

How can I improve the scoring?

On the technical side, the first version was a single large file in Xcode. It worked, but every change meant touching unrelated code. Adjusting exposure meant scanning past composition logic. AI sped up iteration, but the pitfalls of a messy codebase were still real.

I refactored the structure into a small scoring engine with an orchestrator at the center. The orchestrator handled image ingestion and sequencing, then delegated to six focused analyzers. Each analyzer returned a score, and the orchestrator combined them, applied the weights, and generated the explanation shown in the detail view. That separation made iteration much easier. For example, I could rebalance face quality without rewriting sharpness, or adjust noise sensitivity without affecting composition scoring.
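A minimal sketch of that orchestrator-plus-analyzers shape in Swift, assuming one protocol that every analyzer conforms to. `PhotoInput`, `PhotoAnalyzer`, `ScoringOrchestrator`, and `FeatureAnalyzer` are hypothetical names, and `PhotoInput` stands in for real image data; a real analyzer would presumably run Vision requests rather than read precomputed features.

```swift
/// Hypothetical stand-in for decoded image data.
struct PhotoInput {
    let id: String
    let features: [String: Double]  // hypothetical precomputed measurements
}

/// One focused analyzer: a name, a weight, and a normalized 0...1 score.
protocol PhotoAnalyzer {
    var name: String { get }
    var weight: Double { get }
    func score(_ photo: PhotoInput) -> Double
}

struct AnalyzerResult {
    let name: String
    let score: Double
    let weight: Double
}

struct ScoreReport {
    let grade: Double          // 0...100
    let explanation: [String]  // one line per analyzer, e.g. for a detail view
}

/// Orchestrator: runs each analyzer, applies weights, and builds the explanation.
struct ScoringOrchestrator {
    let analyzers: [any PhotoAnalyzer]

    func evaluate(_ photo: PhotoInput) -> ScoreReport {
        let results = analyzers.map { analyzer in
            AnalyzerResult(
                name: analyzer.name,
                score: min(max(analyzer.score(photo), 0), 1),  // clamp to 0...1
                weight: analyzer.weight
            )
        }
        // Dividing by the total weight keeps the grade on a 0...100 scale
        // even if the weights are being rebalanced and no longer sum to 1.
        let totalWeight = results.reduce(0.0) { $0 + $1.weight }
        let weighted = results.reduce(0.0) { $0 + $1.score * $1.weight }
        let grade = totalWeight > 0 ? weighted / totalWeight * 100 : 0
        let explanation = results.map { r in
            "\(r.name): \(Int((r.score * 100).rounded()))/100 (weight \(Int((r.weight * 100).rounded()))%)"
        }
        return ScoreReport(grade: grade, explanation: explanation)
    }
}

/// Hypothetical analyzer that reads one precomputed feature by key.
struct FeatureAnalyzer: PhotoAnalyzer {
    let name: String
    let weight: Double
    let featureKey: String

    func score(_ photo: PhotoInput) -> Double {
        photo.features[featureKey] ?? 0
    }
}
```

Because each weight lives on its own analyzer, rebalancing face quality in this shape means changing one value in one type, and the other analyzers are untouched.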

Around this time I was also paying attention to how I was building. Modern AI tools lowered the barrier to experimentation. I built and ran everything locally in Xcode, but continued to use Goose, with Claude Sonnet as my model, to write code and suggest refactors. I could test scoring variations in hours instead of weeks, and photos that were subjectively good were starting to score higher.

That early refactored engine, with minor tuning, still powers Clixbee today. The next step was a little less obvious until I started testing in the field. I'll cover that next!